Welcome to the February 2026 edition of This Month in AI. This month’s research points to a decisive shift: AI is no longer an innovation initiative; it is becoming the operating backbone of the enterprise. Models are commoditizing, investment is scaling, and governance is maturing. What now separates leaders from followers is not experimentation but execution at scale.

Here’s what’s shaping the conversation this month.

Bain & Company’s The AI Enterprise: Code Red is the clearest declaration that AI has moved beyond productivity tools and copilots into something far more structural: an enterprise operating system. Leading AI platforms are no longer positioning themselves as assistants that sit beside workflows. They are becoming orchestration engines capable of multistep, goal-directed execution across core business systems.

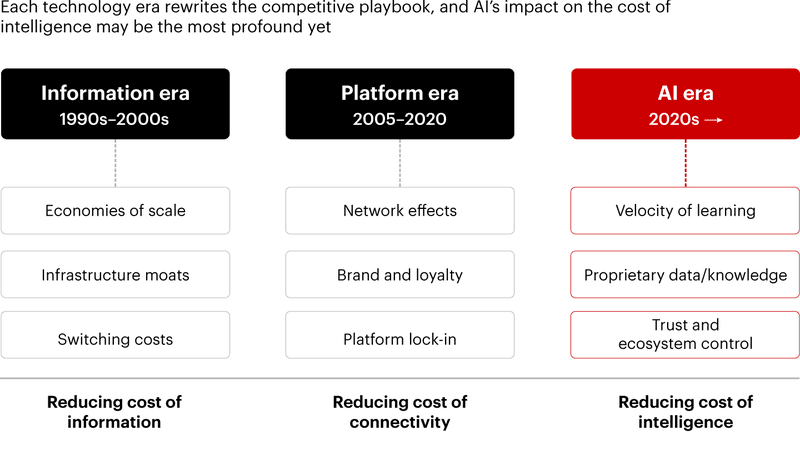

This shift has strategic consequences. When the marginal cost of intelligence collapses, the foundations of competitive advantage begin to move. Historically, advantage accrued to scale, network effects, switching costs, and capital intensity. In the AI era, intelligence becomes widely accessible infrastructure. As that happens, those traditional moats weaken.

Bain identifies new assets of advantage: velocity of learning, proprietary knowledge layers, trust-based ecosystem control, and the ability to industrialize AI agents through repeatable “agent factories.” The firms pulling ahead are not experimenting at the edges. They are redesigning high-value workflows, redefining roles within hybrid human-agent systems, and institutionalizing governance structures that allow AI to scale safely.

Importantly, Bain reframes AI transformation as an operating model redesign rather than a technology program. Workflow modernization must occur in parallel with workforce modernization. Governance must mature alongside autonomy. Scaling must follow deliberate patterns, not scattered experimentation.

The signal is unmistakable: AI is not an incremental capability. It is infrastructure. And infrastructure rewrites competition.

If AI becomes foundational infrastructure, then differentiation must come from somewhere else. Harvard Business Review’s When Every Company Can Use the Same AI Models, Context Becomes a Competitive Advantage addresses this directly. When frontier models are broadly available, no enterprise can rely on exclusive access to intelligence as a defensible edge. The advantage shifts to context.

Context includes proprietary data, embedded domain logic, historical performance signals, and workflow-level knowledge accumulated over years of operation. It is the structured memory of how an organization actually works. When AI is wrapped in this contextual layer, it produces outcomes that generic implementations cannot replicate.

This is a profound strategic shift. In prior waves of enterprise technology, differentiation often emerged from access to superior tools. In the AI era, tools converge rapidly. Models improve universally. Capability spreads quickly. What remains scarce is deeply integrated context.

The implication is operational. Enterprises must move beyond API integration and begin embedding AI directly into their proprietary systems of record and decision flows. Context must be structured, accessible, and usable by intelligent agents. Otherwise, AI will flatten competitive differentiation rather than strengthen it.

In a world of shared intelligence, contextual integration becomes the moat.

If intelligence is abundant and context differentiates, what compounds advantage over time? AiThority’s AI In The Signal Economy: Turning Noise Into Actionable Intelligence, an analysis of AI and decision velocity, introduces the next layer: speed.

AI should not be understood merely as an automation engine. It is a signal processor. Modern enterprises generate enormous volumes of signals — customer behavior, operational performance, supply chain movement, market shifts. The ability to ingest, interpret, and act on those signals in near real time compresses decision cycles. Competitive position shifts from static assets to dynamic learning systems.

Organizations that close feedback loops faster accumulate experience more quickly. Their models improve at a faster rate. Their workflows adapt more fluidly. Their error rates decline more rapidly. Over time, this compounding learning velocity becomes a structural advantage.

This reinforces Bain’s experience-curve argument. Learning velocity matters more than scale. Small, fast-moving players with tight feedback loops can outperform larger incumbents that move slowly.

AI does not simply improve decision quality. It accelerates the rate at which enterprises learn. And in markets defined by continuous change, learning speed is the new moat.

The architectural consequences of this shift are equally significant. CIO’s SaaS Isn’t Dead — The Market Is Just Becoming More Hybrid provides a grounded counterpoint to alarmist narratives of a “SaaSpocalypse.”

Enterprise software is not collapsing. It is evolving. Core systems such as ERP and CRM platforms will persist. They will embed agentic capabilities directly into their architectures. At the same time, AI-native firms will continue to attack narrow workflows with specialized agility. The future is not incumbents versus startups. It is a blended ecosystem.

What changes structurally is the emergence of a new orchestration layer — an enterprise AI operating system responsible for coordinating agents, governing autonomy, and integrating intelligence across systems. This orchestration layer becomes strategically critical. Without it, enterprises risk agent sprawl, fragmented governance, inconsistent security controls, and unpredictable cost scaling. With it, they gain centralized visibility, interoperability, and disciplined oversight.

Pricing models will evolve alongside architecture. Seat-based subscriptions will increasingly give way to hybrid or outcome-based pricing tied to usage, performance, or value delivery. Buyers will gain leverage but face greater complexity. Total cost of ownership will depend not only on software features but on compute dynamics, integration depth, and governance maturity.

The question is no longer which application wins. The question is who owns orchestration — and whether the enterprise can integrate intelligence coherently across its ecosystem.

Architectural clarity becomes a prerequisite for AI maturity.

The final inflection point comes from finance. PYMNTS’ What Happens When CFOs Get Serious About Gen AI signals that generative AI has moved decisively into the core of enterprise operations. CFOs are embedding AI into financial reporting, working capital management, treasury, risk, and compliance functions — areas historically resistant to automation without strict oversight.

Operational friction is declining. Early concerns about errors, integration challenges, and maintenance costs are shrinking as experience compounds. The remaining concerns have migrated upward: talent readiness, data governance, vendor concentration risk, and security.

Most importantly, ROI thinking is maturing. CFOs are no longer seeking a single milestone return or focusing narrowly on headcount reduction. They are distinguishing between targeted use cases that generate near-term productivity gains and enterprise-wide integrations that deliver longer-term margin expansion, capital efficiency, and revenue growth.

AI is being evaluated as an infrastructure investment, not as a short-term cost play. This shift matters. When finance moves from curiosity to capital allocation, AI becomes institutional. Budgets align. Governance strengthens. Accountability formalizes. Scaling accelerates. Transformation stops being aspirational and becomes measurable.

The AI divide in 2026 is not about access to models. That advantage window is closing. The divide is about operational maturity. Enterprises that redesign workflows, embed contextual intelligence deeply into proprietary systems, accelerate feedback loops, establish orchestration governance, and apply disciplined capital allocation will compound advantage. Those that treat AI as a feature layer or experimentation sandbox will struggle to scale impact.

The strategic question for 2026 is not whether to adopt AI. It is whether the organization is prepared to redesign itself around it.

That’s it for the February 2026 edition of This Month in AI. We hope you enjoyed the read.
